Nate Turley

Nate is a creative technologist, technical director, and digital artist.

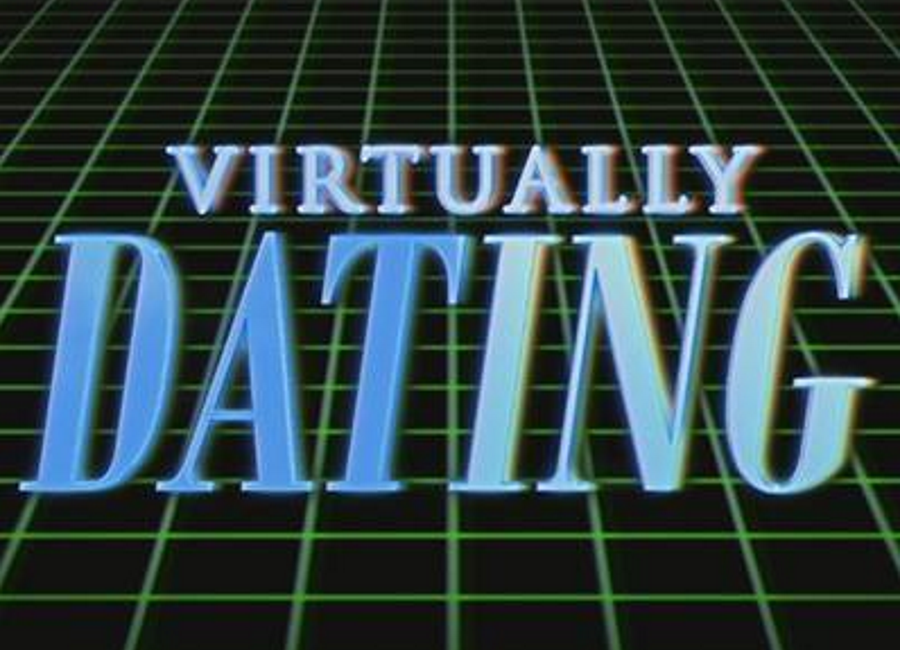

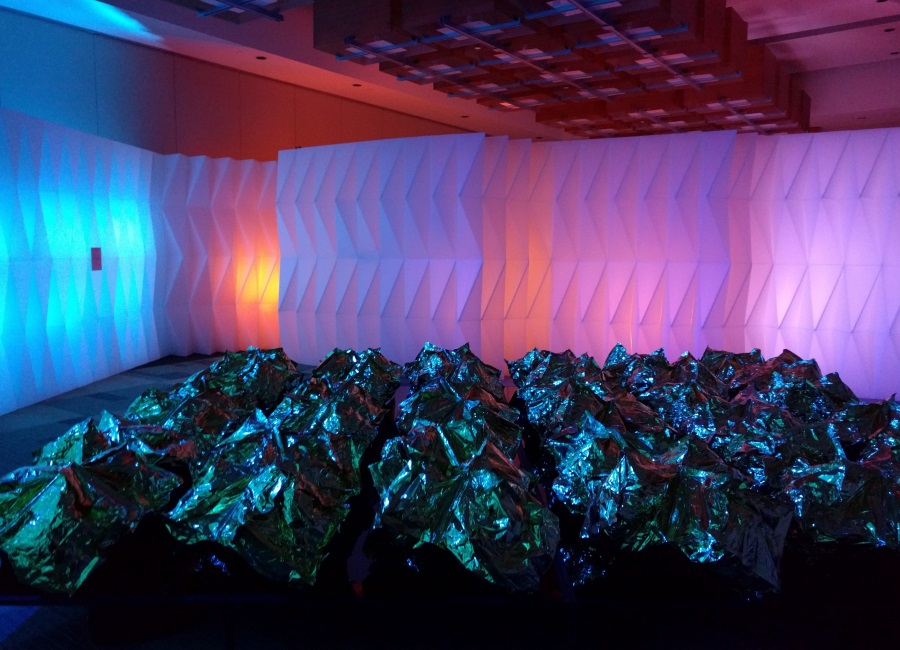

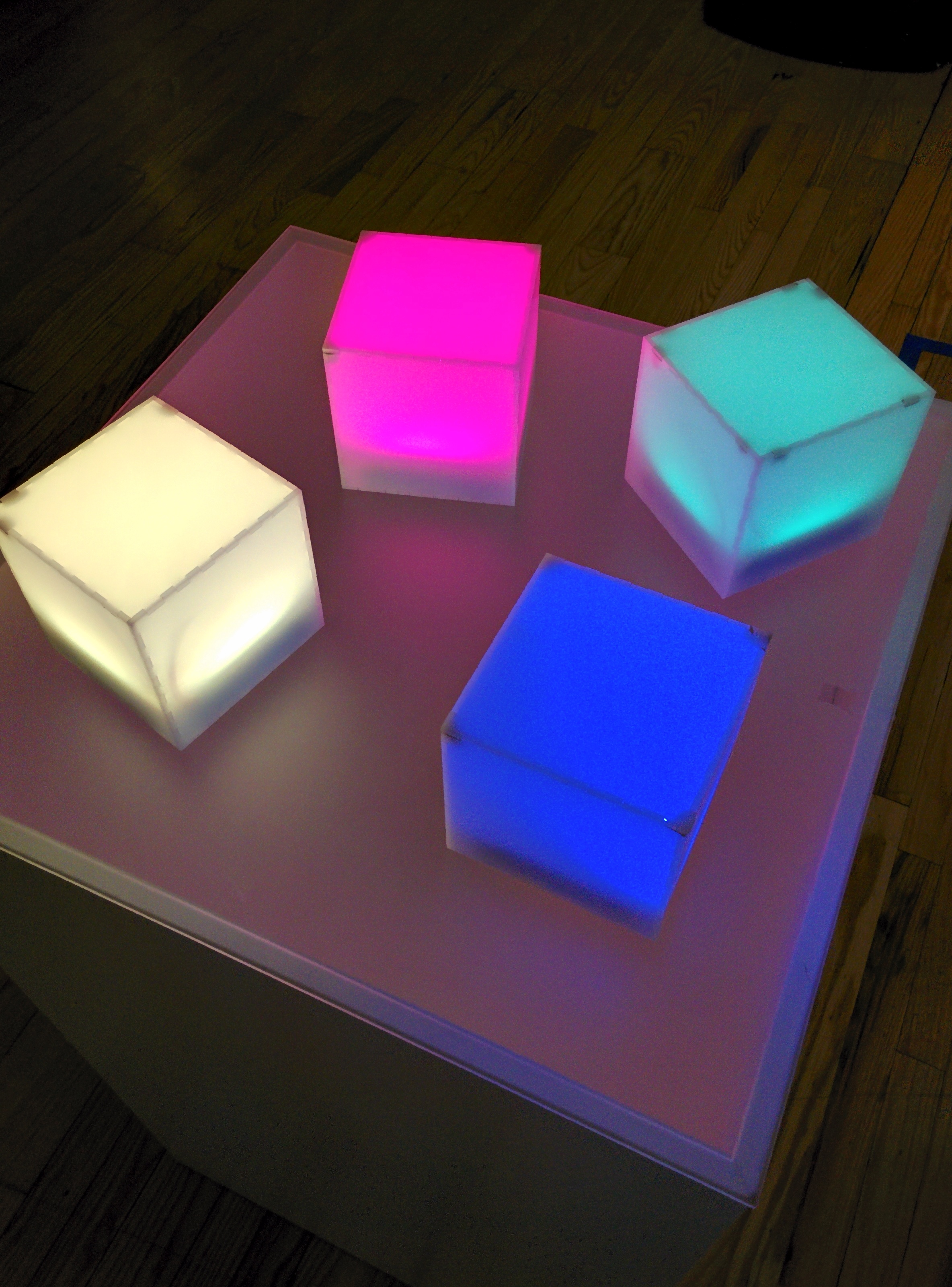

He specializes in creating custom hardware and software systems for interactive audiovisual installations, digital event activations, and live performance. He holds a Bachelor of Science in Electrical and Computer Engineering from The University of Colorado.

He creates work with Unity3D, WebGL, TouchDesigner, Max/MSP, and other tools.

When he's not on a computer, he's exploring the mountains by ski touring or rock climbing.

Nate is currently a Senior Tech Lead at Media.Monks, where he oversees a swath of interactive and immersive projects from pitch to production.